Reference: https://blog.keras.io/building-powerful-image-classification-models-using-very-little-data.html

https://github.com/abnera/image-classifier

https://github.com/abnera/image-classifier/blob/master/code/fine_tune.py

http://www.subsubroutine.com/sub-subroutine/2016/9/30/cats-and-dogs-and-convolutional-neural-networks

NOTE: THIS MAY BE MISLEADING. ALTHOUGH I INTEND TO USE TENSORFLOW, I HAD TO SWITCH TO THE THEANO (‘th’) IMAGE DIMENSION ORDERING TO GET THIS SCRIPT RUNNING. PLEASE READ THE REST TO SEE WHY.

- Make sure Keras and TensorFlow are already installed.

- Download the data from Kaggle’s Dogs vs. Cats competition: train.zip and test1.zip.

- Create a new directory ‘dogs-vs-cats-tf’

Then, in the ‘dogs-vs-cats-tf’ directory, create a new directory ‘data’.

Then, in the ‘data’ directory, create the subdirectories ‘train/dogs’, ‘train/cats’, ‘validation/dogs’ and ‘validation/cats’.

- Since this is my first time using Keras and TensorFlow, I only want to use 1000 training samples per class (1000 dogs and 1000 cats, although the original dataset has 12,500 cats and 12,500 dogs). We put them in their respective directories (data/train/dogs and data/train/cats).

Then we use an additional 400 samples per class as validation data to evaluate our models. We put them in their respective directories (data/validation/dogs and data/validation/cats).

Here are the exact instructions:

– cat pictures index 0-999 in data/train/cats

– cat pictures index 1000-1399 in data/validation/cats

– dog pictures index 0-999 in data/train/dogs

– dog pictures index 1000-1399 in data/validation/dogs

Example: Dogs vs Cats (Directory Structure)

```
data/
    train/
        dogs/
            dog.0.jpg
            dog.1.jpg
            ...
            dog.999.jpg
        cats/
            cat.0.jpg
            cat.1.jpg
            ...
            cat.999.jpg
    validation/
        dogs/
            dog.1000.jpg
            dog.1001.jpg
            ...
            dog.1399.jpg
        cats/
            cat.1000.jpg
            cat.1001.jpg
            ...
            cat.1399.jpg
```
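The copying above can be scripted instead of done by hand. Below is a minimal sketch, assuming train.zip has been extracted into a folder of Kaggle's `cat.<index>.jpg` / `dog.<index>.jpg` files. The function name `split_dataset` and its parameters are hypothetical, not part of the referenced tutorial.

```python
import os
import shutil


def split_dataset(src_dir, dst_dir, n_train=1000, n_val=400):
    """Copy cat.N.jpg / dog.N.jpg from src_dir into the train/validation layout.

    Indices 0..n_train-1 go to train/, the next n_val indices go to validation/.
    Assumes src_dir is the folder extracted from Kaggle's train.zip.
    """
    splits = {
        "train": range(0, n_train),
        "validation": range(n_train, n_train + n_val),
    }
    for split, indices in splits.items():
        for label in ("cat", "dog"):
            # e.g. data/train/cats or data/validation/dogs
            out_dir = os.path.join(dst_dir, split, label + "s")
            os.makedirs(out_dir, exist_ok=True)
            for i in indices:
                name = "{}.{}.jpg".format(label, i)
                shutil.copyfile(os.path.join(src_dir, name),
                                os.path.join(out_dir, name))
```

For example, `split_dataset("train", "data")` (assuming the zip was extracted to `./train`) produces the 1000/400 split per class described above.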

- In the ‘dogs-vs-cats-tf’ directory, create a new Python file ‘dogs-vs-cats1.py’. Here is the content (ref: https://gist.github.com/fchollet/0830affa1f7f19fd47b06d4cf89ed44d).

````python
'''This script goes along the blog post
"Building powerful image classification models using very little data"
from blog.keras.io.
It uses data that can be downloaded at:
https://www.kaggle.com/c/dogs-vs-cats/data
In our setup, we:
- created a data/ folder
- created train/ and validation/ subfolders inside data/
- created cats/ and dogs/ subfolders inside train/ and validation/
- put the cat pictures index 0-999 in data/train/cats
- put the cat pictures index 1000-1399 in data/validation/cats
- put the dogs pictures index 0-999 in data/train/dogs
- put the dog pictures index 1000-1399 in data/validation/dogs
So that we have 1000 training examples for each class, and 400 validation examples for each class.
In summary, this is our directory structure:
```
data/
    train/
        dogs/
            dog.0.jpg
            dog.1.jpg
            ...
            dog.999.jpg
        cats/
            cat.0.jpg
            cat.1.jpg
            ...
            cat.999.jpg
    validation/
        dogs/
            dog.1000.jpg
            dog.1001.jpg
            ...
            dog.1399.jpg
        cats/
            cat.1000.jpg
            cat.1001.jpg
            ...
            cat.1399.jpg
```
'''

from keras.preprocessing.image import ImageDataGenerator
from keras.models import Sequential
from keras.layers import Convolution2D, MaxPooling2D
from keras.layers import Activation, Dropout, Flatten, Dense


# dimensions of our images.
img_width, img_height = 150, 150

train_data_dir = 'data/train'
validation_data_dir = 'data/validation'
nb_train_samples = 2000
nb_validation_samples = 800
nb_epoch = 50

model = Sequential()
model.add(Convolution2D(32, 3, 3, input_shape=(3, img_width, img_height)))
model.add(Activation('relu'))
model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Convolution2D(32, 3, 3))
model.add(Activation('relu'))
model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Convolution2D(64, 3, 3))
model.add(Activation('relu'))
model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Flatten())
model.add(Dense(64))
model.add(Activation('relu'))
model.add(Dropout(0.5))
model.add(Dense(1))
model.add(Activation('sigmoid'))

model.compile(loss='binary_crossentropy',
              optimizer='rmsprop',
              metrics=['accuracy'])

# this is the augmentation configuration we will use for training
train_datagen = ImageDataGenerator(
        rescale=1./255,
        shear_range=0.2,
        zoom_range=0.2,
        horizontal_flip=True)

# this is the augmentation configuration we will use for testing:
# only rescaling
test_datagen = ImageDataGenerator(rescale=1./255)

train_generator = train_datagen.flow_from_directory(
        train_data_dir,
        target_size=(img_width, img_height),
        batch_size=32,
        class_mode='binary')

validation_generator = test_datagen.flow_from_directory(
        validation_data_dir,
        target_size=(img_width, img_height),
        batch_size=32,
        class_mode='binary')

model.fit_generator(
        train_generator,
        samples_per_epoch=nb_train_samples,
        nb_epoch=nb_epoch,
        validation_data=validation_generator,
        nb_val_samples=nb_validation_samples)

#model.load_weights('first_try.h5')
model.save_weights('first_try.h5')
````
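As a rough sanity check on the architecture above, its parameter count can be worked out by hand. This plain-Python sketch assumes Keras's default 'valid' (no padding) convolutions and 2x2 max-pools with stride 2, which is what the script uses. The helper names are hypothetical.

```python
def conv_params(k, in_ch, out_ch):
    """Weights (k*k*in_ch*out_ch) plus one bias per output filter."""
    return k * k * in_ch * out_ch + out_ch


def dense_params(n_in, n_out):
    """Weight matrix plus one bias per output unit."""
    return n_in * n_out + n_out


def count_params(img_size=150):
    """Return (flattened length, total parameters) for dogs-vs-cats1.py's model."""
    size, channels, total = img_size, 3, 0
    for filters in (32, 32, 64):
        total += conv_params(3, channels, filters)
        size = size - 3 + 1          # 3x3 convolution, no padding ('valid')
        size = (size - 2) // 2 + 1   # 2x2 max-pool, stride 2
        channels = filters
    flat = size * size * channels    # length of the Flatten() output
    total += dense_params(flat, 64)  # Dense(64)
    total += dense_params(64, 1)     # Dense(1) before the sigmoid
    return flat, total
```

`count_params()` gives a flattened length of 18,496 (17 x 17 x 64) and 1,212,513 trainable parameters. Over 97% of them sit in the Dense(64) layer. Keras's `model.summary()` prints the same per-layer breakdown and can be used to cross-check.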

- Run the python file.

NOTE: In http://myprojects.advchaweb.com/index.php/2017/02/22/installation-of-opencv-keras-and-tensorflow-on-ubuntu-14-04/, I created the virtualenv ‘opencv_keras_tf’ and installed Keras and TensorFlow there. Use the virtualenv! The first time, I forgot to activate it and got this error when I tried to run the Python file:

```
teddy@teddy-K43SJ:~/Documents/python/dogs-vs-cats-tf$ python dogs-vs-cats1.py
Traceback (most recent call last):
  File "dogs-vs-cats1.py", line 43, in <module>
    from keras.preprocessing.image import ImageDataGenerator
ImportError: No module named keras.preprocessing.image
```

The error ‘ImportError: No module named keras.preprocessing.image’ occurs because I ONLY installed Keras in the virtualenv ‘opencv_keras_tf’, NOT GLOBALLY!
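A quick way to see which interpreter a script is running under, and whether it can find Keras and TensorFlow, is a small standard-library check like the sketch below. The function names are hypothetical. Run it with the same `python` command you use for `dogs-vs-cats1.py`.

```python
import importlib.util
import sys


def in_virtualenv():
    """True when running inside a virtualenv/venv.

    Older virtualenv versions set sys.real_prefix; venv and newer
    virtualenv versions make sys.prefix differ from sys.base_prefix.
    """
    return hasattr(sys, "real_prefix") or sys.prefix != getattr(sys, "base_prefix", sys.prefix)


def missing_modules(names=("keras", "tensorflow")):
    """Return the top-level modules this interpreter cannot find."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    print("python:", sys.executable)
    print("inside virtualenv:", in_virtualenv())
    print("missing:", missing_modules() or "none")
```

If `keras` shows up as missing, the virtualenv is probably not active.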

OK. Activate the virtualenv ‘opencv_keras_tf’, then run the Python file.

```
teddy@teddy-K43SJ:~/Documents/python/dogs-vs-cats-tf$ workon opencv_keras_tf
```

```
(opencv_keras_tf) teddy@teddy-K43SJ:~/Documents/python/dogs-vs-cats-tf$ python dogs-vs-cats1.py
Using TensorFlow backend.
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcublas.so locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcudnn.so locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcufft.so locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcuda.so.1 locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcurand.so locally
Traceback (most recent call last):
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/framework/common_shapes.py", line 594, in call_cpp_shape_fn
    status)
  File "/usr/lib/python3.4/contextlib.py", line 66, in __exit__
    next(self.gen)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/framework/errors.py", line 463, in raise_exception_on_not_ok_status
    pywrap_tensorflow.TF_GetCode(status))
tensorflow.python.framework.errors.InvalidArgumentError: Negative dimension size caused by subtracting 2 from 1

During handling of the above exception, another exception occurred:

Traceback (most recent call last):
  File "dogs-vs-cats1.py", line 62, in <module>
    model.add(MaxPooling2D(pool_size=(2, 2)))
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/models.py", line 332, in add
    output_tensor = layer(self.outputs[0])
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/engine/topology.py", line 572, in __call__
    self.add_inbound_node(inbound_layers, node_indices, tensor_indices)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/engine/topology.py", line 635, in add_inbound_node
    Node.create_node(self, inbound_layers, node_indices, tensor_indices)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/engine/topology.py", line 166, in create_node
    output_tensors = to_list(outbound_layer.call(input_tensors[0], mask=input_masks[0]))
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/layers/pooling.py", line 160, in call
    dim_ordering=self.dim_ordering)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/layers/pooling.py", line 210, in _pooling_function
    pool_mode='max')
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/backend/tensorflow_backend.py", line 2866, in pool2d
    x = tf.nn.max_pool(x, pool_size, strides, padding=padding)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/ops/nn_ops.py", line 850, in max_pool
    name=name)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/ops/gen_nn_ops.py", line 1440, in _max_pool
    data_format=data_format, name=name)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/framework/op_def_library.py", line 749, in apply_op
    op_def=op_def)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/framework/ops.py", line 2382, in create_op
    set_shapes_for_outputs(ret)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/framework/ops.py", line 1783, in set_shapes_for_outputs
    shapes = shape_func(op)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/framework/common_shapes.py", line 596, in call_cpp_shape_fn
    raise ValueError(err.message)
ValueError: Negative dimension size caused by subtracting 2 from 1
```

I GOT AN ERROR: ‘ValueError: Negative dimension size caused by subtracting 2 from 1’

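SOLUTION: Modify keras.json and change ‘image_dim_ordering’ from ‘tf’ to ‘th’. WHY DIDN’T ‘tf’ WORK? The script declares input_shape=(3, img_width, img_height), which is the channels-first (Theano, ‘th’) layout. With ‘tf’ ordering, Keras reads that tuple as 3 rows x 150 columns x 150 channels. After the first 3x3 convolution only 1 row is left, so the first 2x2 max-pool fails with ‘subtracting 2 from 1’. Keeping ‘tf’ and changing the script to input_shape=(img_width, img_height, 3) should also work.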
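The error comes from shape arithmetic, not from TensorFlow itself. This plain-Python sketch follows the image size through the model's three Conv/MaxPool blocks under both orderings. It mimics Keras's default 'valid' padding rather than calling Keras, and the helper names are hypothetical.

```python
def conv2d_valid(h, w, k=3):
    """Spatial size after a k x k convolution with no padding, stride 1."""
    if h < k or w < k:
        raise ValueError("Negative dimension size caused by subtracting "
                         "{} from {}".format(k, min(h, w)))
    return h - k + 1, w - k + 1


def maxpool2d_valid(h, w, p=2):
    """Spatial size after a p x p max-pool with stride p, no padding."""
    if h < p or w < p:
        raise ValueError("Negative dimension size caused by subtracting "
                         "{} from {}".format(p, min(h, w)))
    return (h - p) // p + 1, (w - p) // p + 1


def trace_shapes(input_shape, dim_ordering):
    """Follow (rows, cols) through the model's three Conv/MaxPool blocks.

    'th' reads input_shape as (channels, rows, cols);
    'tf' reads it as (rows, cols, channels).
    """
    if dim_ordering == "th":
        _, h, w = input_shape
    elif dim_ordering == "tf":
        h, w, _ = input_shape
    else:
        raise ValueError("unknown dim_ordering: " + dim_ordering)
    shapes = [(h, w)]
    for _ in range(3):
        h, w = conv2d_valid(h, w)
        h, w = maxpool2d_valid(h, w)
        shapes.append((h, w))
    return shapes
```

`trace_shapes((3, 150, 150), "th")` shrinks 150 -> 74 -> 36 -> 17. `trace_shapes((3, 150, 150), "tf")` starts from only 3 rows, reaches 1 row after the first convolution, and raises the same "subtracting 2 from 1" error at the first pool.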

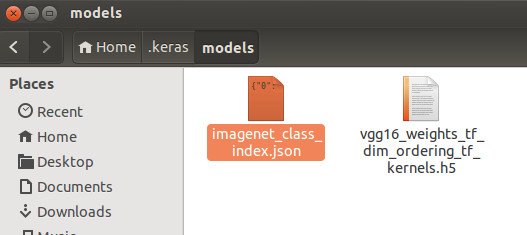

```
(opencv_keras_tf) teddy@teddy-K43SJ:~/Documents/python/dogs-vs-cats-tf$ gedit ~/.keras/keras.json
```

SO HERE IS THE CONTENT OF ~/.keras/keras.json

```json
{
    "backend": "tensorflow",
    "image_dim_ordering": "th",
    "epsilon": 1e-07,
    "floatx": "float32"
}
```
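Instead of editing the file in gedit, you can make the same change with a few lines of standard-library Python. This is a minimal sketch. It assumes the Keras 1.x key name `image_dim_ordering` shown above; Keras 2 renamed this key to `image_data_format`, with the values `channels_first`/`channels_last`. The function name is hypothetical.

```python
import json
import os


def set_dim_ordering(ordering, path=os.path.expanduser("~/.keras/keras.json")):
    """Set "image_dim_ordering" in a Keras 1.x keras.json, keeping the other keys."""
    if ordering not in ("th", "tf"):
        raise ValueError("ordering must be 'th' or 'tf'")
    with open(path) as f:
        config = json.load(f)
    config["image_dim_ordering"] = ordering
    with open(path, "w") as f:
        json.dump(config, f, indent=4)
    return config
```

Keras reads keras.json when it is imported, so the new setting takes effect on the next run of the script.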

RUN AGAIN (IT’S WORKING NOW):

START AT 2017-02-24 10:08PM

```
(opencv_keras_tf) teddy@teddy-K43SJ:~/Documents/python/dogs-vs-cats-tf$ python dogs-vs-cats1.py
Using TensorFlow backend.
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcublas.so locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcudnn.so locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcufft.so locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcuda.so.1 locally
I tensorflow/stream_executor/dso_loader.cc:111] successfully opened CUDA library libcurand.so locally
Found 2000 images belonging to 2 classes.
Found 800 images belonging to 2 classes.
Epoch 1/50
I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:925] successful NUMA node read from SysFS had negative value (-1), but there must be at least one NUMA node, so returning NUMA node zero
I tensorflow/core/common_runtime/gpu/gpu_device.cc:951] Found device 0 with properties:
name: GeForce GT 520M
major: 2 minor: 1 memoryClockRate (GHz) 1.48
pciBusID 0000:01:00.0
Total memory: 963.62MiB
Free memory: 758.38MiB
I tensorflow/core/common_runtime/gpu/gpu_device.cc:972] DMA: 0
I tensorflow/core/common_runtime/gpu/gpu_device.cc:982] 0: Y
I tensorflow/core/common_runtime/gpu/gpu_device.cc:1014] Ignoring visible gpu device (device: 0, name: GeForce GT 520M, pci bus id: 0000:01:00.0) with Cuda compute capability 2.1. The minimum required Cuda capability is 3.0.
32/2000 [..............................] - ETA: 536s - loss: 0.6856 - acc: 0.6...
2000/2000 [==============================] - 589s - loss: 0.7349 - acc: 0.5115 - val_loss: 0.6872 - val_acc: 0.5563
Epoch 2/50
32/2000 [..............................] - ETA: 451s - loss: 0.6873 - acc: 0.5...
2000/2000 [==============================] - 630s - loss: 0.6964 - acc: 0.5695 - val_loss: 0.6541 - val_acc: 0.6663
Epoch 3/50
32/2000 [..............................] - ETA: 499s - loss: 0.6306 - acc: 0.7...
2000/2000 [==============================] - 599s - loss: 0.6610 - acc: 0.6070 - val_loss: 0.6264 - val_acc: 0.6412
```

NOTE: Read this about CUDA compute capability: http://stackoverflow.com/questions/10961476/what-are-the-differences-between-cuda-compute-capabilities

IT DEPENDS ON THE HARDWARE (THE GPU ITSELF)! NOTHING CAN BE DONE TO IMPROVE THE COMPUTE CAPABILITY!

AFTER READING https://www.tensorflow.org/versions/r0.10/get_started/os_setup#installing_from_sources AND http://stackoverflow.com/questions/38542763/how-can-i-make-tensorflow-run-on-a-gpu-with-capability-2-0, I GOT THE SAD NEWS:

“In order to build or run TensorFlow with GPU support, both NVIDIA’s Cuda Toolkit (>= 7.0) and cuDNN (>= v3) need to be installed.

TensorFlow GPU support requires having a GPU card with NVidia Compute Capability >= 3.0.”

SO MY GPU, A GeForce GT 520M WITH A CUDA COMPUTE CAPABILITY OF ONLY 2.1 (REF: https://en.wikipedia.org/wiki/CUDA), CAN’T BE USED FOR TENSORFLOW!!! I CAN ONLY USE THE CPU!!!

I STOPPED AFTER THE 3RD EPOCH (2017-02-24 10:39PM) BECAUSE I HAD SOMETHING ELSE TO DO. HOPEFULLY I CAN RESUME THIS TOMORROW.

I DID IT AGAIN!

```
epoch 1/50 start at 2017-02-25 08:45PM
2000/2000 [==============================] - 500s - loss: 0.6997 - acc: 0.5270 - val_loss: 0.6800 - val_acc: 0.5025
Epoch 2/50
2000/2000 [==============================] - 533s - loss: 0.6652 - acc: 0.6100 - val_loss: 0.6326 - val_acc: 0.6613
Epoch 3/50
2000/2000 [==============================] - 539s - loss: 0.6291 - acc: 0.6540 - val_loss: 0.6102 - val_acc: 0.6700
Epoch 4/50
2000/2000 [==============================] - 559s - loss: 0.6141 - acc: 0.6875 - val_loss: 0.5782 - val_acc: 0.6863
Epoch 5/50
2000/2000 [==============================] - 528s - loss: 0.5825 - acc: 0.6920 - val_loss: 0.6414 - val_acc: 0.6112
Epoch 6/50
2000/2000 [==============================] - 526s - loss: 0.5719 - acc: 0.7085 - val_loss: 0.5651 - val_acc: 0.6750
Epoch 7/50
2000/2000 [==============================] - 513s - loss: 0.5550 - acc: 0.7240 - val_loss: 0.5450 - val_acc: 0.7037
Epoch 8/50
...
2000/2000 [==============================] - 495s - loss: 0.4445 - acc: 0.7960 - val_loss: 0.4813 - val_acc: 0.7788
Epoch 17/50
2000/2000 [==============================] - 495s - loss: 0.4424 - acc: 0.7930 - val_loss: 0.4919 - val_acc: 0.7638
Epoch 18/50
2000/2000 [==============================] - 495s - loss: 0.4343 - acc: 0.8065 - val_loss: 0.4890 - val_acc: 0.7625
Epoch 19/50
2000/2000 [==============================] - 494s - loss: 0.4202 - acc: 0.8085 - val_loss: 0.5072 - val_acc: 0.7538
Epoch 20/50
2000/2000 [==============================] - 493s - loss: 0.4146 - acc: 0.8230 - val_loss: 0.4561 - val_acc: 0.7900
Epoch 21/50
2000/2000 [==============================] - 492s - loss: 0.4210 - acc: 0.8125 - val_loss: 0.5270 - val_acc: 0.7450
Epoch 22/50
2000/2000 [==============================] - 493s - loss: 0.4179 - acc: 0.8065 - val_loss: 0.4678 - val_acc: 0.7925
Epoch 23/50
2000/2000 [==============================] - 494s - loss: 0.3911 - acc: 0.8245 - val_loss: 0.4943 - val_acc: 0.7488
Epoch 24/50
2000/2000 [==============================] - 493s - loss: 0.3944 - acc: 0.8230 - val_loss: 0.5489 - val_acc: 0.7612
Epoch 25/50
2000/2000 [==============================] - 494s - loss: 0.3874 - acc: 0.8280 - val_loss: 0.5076 - val_acc: 0.7762
Epoch 26/50
2000/2000 [==============================] - 493s - loss: 0.3863 - acc: 0.8320 - val_loss: 0.5106 - val_acc: 0.7550
Epoch 27/50
2000/2000 [==============================] - 494s - loss: 0.3838 - acc: 0.8370 - val_loss: 0.5342 - val_acc: 0.7588
Epoch 28/50
2000/2000 [==============================] - 491s - loss: 0.3684 - acc: 0.8400 - val_loss: 0.7603 - val_acc: 0.7400
Epoch 29/50
2000/2000 [==============================] - 495s - loss: 0.3615 - acc: 0.8335 - val_loss: 0.5512 - val_acc: 0.7837
Epoch 30/50
2000/2000 [==============================] - 490s - loss: 0.3677 - acc: 0.8370 - val_loss: 0.4612 - val_acc: 0.7950
Epoch 31/50
2000/2000 [==============================] - 493s - loss: 0.3570 - acc: 0.8395 - val_loss: 0.4771 - val_acc: 0.7775
Epoch 32/50
2000/2000 [==============================] - 494s - loss: 0.3334 - acc: 0.8625 - val_loss: 0.5000 - val_acc: 0.7963
Epoch 33/50
2000/2000 [==============================] - 490s - loss: 0.3448 - acc: 0.8475 - val_loss: 0.4768 - val_acc: 0.7750
Epoch 34/50
2000/2000 [==============================] - 493s - loss: 0.3495 - acc: 0.8535 - val_loss: 0.6233 - val_acc: 0.7412
Epoch 35/50
2000/2000 [==============================] - 492s - loss: 0.3237 - acc: 0.8505 - val_loss: 0.5072 - val_acc: 0.8125
Epoch 36/50
2000/2000 [==============================] - 490s - loss: 0.3285 - acc: 0.8565 - val_loss: 0.5616 - val_acc: 0.7875
Epoch 37/50
2000/2000 [==============================] - 490s - loss: 0.3200 - acc: 0.8690 - val_loss: 0.7266 - val_acc: 0.7412
Epoch 38/50
2000/2000 [==============================] - 491s - loss: 0.3317 - acc: 0.8595 - val_loss: 0.9790 - val_acc: 0.7113
Epoch 39/50
2000/2000 [==============================] - 489s - loss: 0.3331 - acc: 0.8615 - val_loss: 0.5178 - val_acc: 0.7725
Epoch 40/50
2000/2000 [==============================] - 489s - loss: 0.3212 - acc: 0.8655 - val_loss: 0.4486 - val_acc: 0.8087
Epoch 41/50
2000/2000 [==============================] - 491s - loss: 0.3147 - acc: 0.8725 - val_loss: 0.5545 - val_acc: 0.7887
Epoch 42/50
2000/2000 [==============================] - 488s - loss: 0.3199 - acc: 0.8650 - val_loss: 0.5090 - val_acc: 0.8087
Epoch 43/50
2000/2000 [==============================] - 491s - loss: 0.3182 - acc: 0.8605 - val_loss: 0.5351 - val_acc: 0.7950
Epoch 44/50
2000/2000 [==============================] - 490s - loss: 0.3030 - acc: 0.8665 - val_loss: 0.4939 - val_acc: 0.7963
Epoch 45/50
2000/2000 [==============================] - 490s - loss: 0.3026 - acc: 0.8705 - val_loss: 0.6770 - val_acc: 0.7762
Epoch 46/50
2000/2000 [==============================] - 488s - loss: 0.3124 - acc: 0.8625 - val_loss: 0.5910 - val_acc: 0.7850
Epoch 47/50
2000/2000 [==============================] - 518s - loss: 0.3069 - acc: 0.8725 - val_loss: 0.5056 - val_acc: 0.8063
Epoch 48/50 START AT 2017-02-26 03:14AM
2000/2000 [==============================] - 526s - loss: 0.3038 - acc: 0.8790 - val_loss: 0.5111 - val_acc: 0.7937
Epoch 49/50
2000/2000 [==============================] - 501s - loss: 0.2811 - acc: 0.8870 - val_loss: 0.4624 - val_acc: 0.8013
Epoch 50/50
2000/2000 [==============================] - 497s - loss: 0.2817 - acc: 0.8815 - val_loss: 0.7820 - val_acc: 0.7662
END AT 2017-02-26 03:45AM
```

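STARTED AT 2017-02-25 08:45PM AND ENDED AT 2017-02-26 03:45AM, SO THE TRAINING PROCESS RAN FOR 7 HOURS!!! AND THE ACCURACY IS 88.15%??? (NOTE: 88.15% IS THE TRAINING ACCURACY AT EPOCH 50. THE VALIDATION ACCURACY AT EPOCH 50 IS ONLY 76.62%, AND THE BEST VALIDATION ACCURACY AMONG THE EPOCHS SHOWN IS 81.25% AT EPOCH 35.)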
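The last epoch is not necessarily the best one, because val_acc jumps around from epoch to epoch. A small parser can pick out the epoch with the best validation accuracy from a saved log. It uses the standard-library `re` module and assumes the Keras 1.x progress-line format shown above. The function name is hypothetical.

```python
import re

# "Epoch 35/50" header lines (case-insensitive, so "epoch 1/50 start at ..." also matches)
EPOCH_RE = re.compile(r"^epoch (\d+)/\d+", re.IGNORECASE)
# end-of-epoch summary lines, e.g. "... - val_loss: 0.5072 - val_acc: 0.8125"
VAL_ACC_RE = re.compile(r"val_acc: ([0-9.]+)")


def best_val_acc(log_text):
    """Return (epoch, val_acc) for the highest val_acc in a Keras log, or None."""
    epoch, best = None, None
    for line in log_text.splitlines():
        m = EPOCH_RE.match(line.strip())
        if m:
            epoch = int(m.group(1))
            continue
        m = VAL_ACC_RE.search(line)
        if m and epoch is not None:
            acc = float(m.group(1))
            if best is None or acc > best[1]:
                best = (epoch, acc)
    return best
```

On the 50-epoch log above, this picks out epoch 35 (val_acc 0.8125) rather than the last epoch. Within Keras, the `ModelCheckpoint` callback with `monitor='val_acc'` and `save_best_only=True`, passed through `callbacks=[...]` to `fit_generator`, is the usual way to keep the weights from the best epoch.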

AND AT THE END I GOT THIS ERROR

```
Traceback (most recent call last):
  File "dogs-vs-cats1.py", line 117, in <module>
    model.load_weights('first_try.h5')
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/keras/engine/topology.py", line 2702, in load_weights
    f = h5py.File(filepath, mode='r')
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/h5py/_hl/files.py", line 272, in __init__
    fid = make_fid(name, mode, userblock_size, fapl, swmr=swmr)
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/h5py/_hl/files.py", line 92, in make_fid
    fid = h5f.open(name, flags, fapl=fapl)
  File "h5py/_objects.pyx", line 54, in h5py._objects.with_phil.wrapper (/tmp/pip-eeirwumi-build/h5py/_objects.c:2684)
  File "h5py/_objects.pyx", line 55, in h5py._objects.with_phil.wrapper (/tmp/pip-eeirwumi-build/h5py/_objects.c:2642)
  File "h5py/h5f.pyx", line 76, in h5py.h5f.open (/tmp/pip-eeirwumi-build/h5py/h5f.c:1930)
OSError: Unable to open file (Unable to open file: name = 'first_try.h5', errno = 2, error message = 'no such file or directory', flags = 0, o_flags = 0)
Exception ignored in: <bound method Session.__del__ of <tensorflow.python.client.session.Session object at 0x7f2363381d30>>
Traceback (most recent call last):
  File "/home/teddy/.virtualenvs/opencv_keras_tf/lib/python3.4/site-packages/tensorflow/python/client/session.py", line 532, in __del__
AttributeError: 'NoneType' object has no attribute 'TF_DeleteStatus'
```

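ALSO, I CAN’T FIND THE ‘first_try.h5’ FILE!!!! -> WHY: model.load_weights() only reads an existing HDF5 file; it does not create one. The script never saved the weights, so there was nothing to load (errno 2, no such file or directory), and the weights from this run were lost. SOLUTION: In the file ‘dogs-vs-cats1.py’, replace ‘model.load_weights('first_try.h5')’ with ‘model.save_weights('first_try.h5')’, LIKE THIS: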

```python
...
#model.load_weights('first_try.h5')
model.save_weights('first_try.h5')
```

NOW I HAVE THE WEIGHTS FILE ‘first_try.h5’ IN THE ‘dogs-vs-cats-tf’ DIRECTORY!

NOTE: I ONLY SET ‘nb_epoch = 5’ TO CHECK WHETHER THE .h5 FILE WOULD BE CREATED. SO THE ACCURACY IS NOT VERY GOOD (70.15% TRAINING ACCURACY, 68.13% VALIDATION ACCURACY). HERE IS THE LAST LINE ON MY TERMINAL AFTER THE 5TH EPOCH:

```
2000/2000 [==============================] - 558s - loss: 0.5839 - acc: 0.7015 - val_loss: 0.5949 - val_acc: 0.6813
```
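A script can guard against two problems: loading a weights file before it exists, and finishing a long run without saving. The idea is to check for the file first. This minimal sketch assumes only that the model has Keras's `load_weights(filepath)` and `save_weights(filepath)` methods. `restore_or_train` and `train_fn` are hypothetical names.

```python
import os


def restore_or_train(model, weights_path, train_fn):
    """Load saved weights if the file exists; otherwise train, then save.

    `model` is anything with Keras-style load_weights()/save_weights()
    methods; `train_fn` is a hypothetical zero-argument callable that runs
    the training (for example, a wrapper around model.fit_generator(...)).
    """
    if os.path.exists(weights_path):
        model.load_weights(weights_path)
        return "loaded"
    train_fn()
    model.save_weights(weights_path)
    return "trained"
```

In dogs-vs-cats1.py, this could replace the final `fit_generator(...)` call and the `save_weights` line. `train_fn` would wrap the existing `model.fit_generator(...)` call.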

OK. NOW, HOW DO I USE THE WEIGHTS FILE FOR TESTING???
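One detail matters when interpreting predictions from this model. With `class_mode='binary'`, the single sigmoid output estimates the probability of the class with index 1. By default, `flow_from_directory` numbers the class subdirectories in sorted order, and the mapping is available as `train_generator.class_indices`. So here cats = 0 and dogs = 1. The sketch below shows that mapping in plain Python. The function names are hypothetical, and the 0.5 threshold is a choice rather than anything fixed by Keras.

```python
def infer_class_indices(class_dirs):
    """Mimic flow_from_directory's default: sorted subdirectory names, numbered from 0."""
    return {name: i for i, name in enumerate(sorted(class_dirs))}


def label_from_probability(p, class_indices, threshold=0.5):
    """Turn the model's single sigmoid output into a class name.

    With class_mode='binary', p estimates the probability of the class
    whose index is 1; anything below the threshold maps to index 0.
    """
    index_to_class = {v: k for k, v in class_indices.items()}
    return index_to_class[1] if p >= threshold else index_to_class[0]
```

In real use, pass the generator's `class_indices` and the value returned by `model.predict`. Test images should get the same preprocessing as the validation data: resized to 150x150 and rescaled by 1./255.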